85% of AI Resume Screeners Prefer White Names: Why 2025 Is The Year Hiring Discrimination Lawsuits Exploded (A Comprehensive Research Report)

Last Updated: April 23, 2026

Every day, millions of Americans submit job applications that never reach human eyes.

The rejection happens in milliseconds. An algorithm scans your resume, assigns you a score, and decides you’re not worth interviewing. You’ll never know why. The system flagged something in your background, your school, or perhaps just your name.

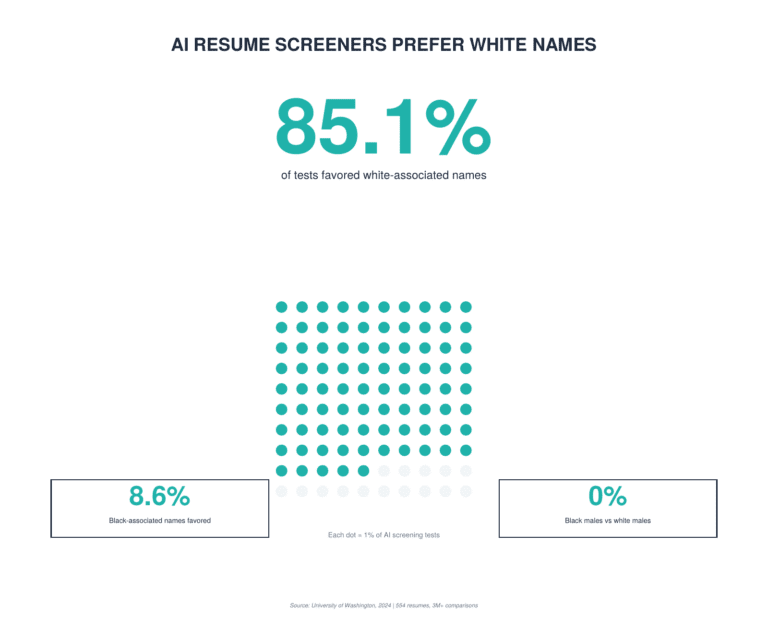

This isn’t speculation. Researchers proved it with a single devastating statistic: AI resume screeners prefer white-associated names 85.1% of the time.

And in 2025, that proof triggered a legal earthquake.

We spent weeks mapping every major AI hiring discrimination lawsuit filed or advancing through the courts in 2024 and 2025. What we found is a system in crisis, with courts finally catching up to technology that’s been discriminating at scale for years.

Here’s everything you need to know about the AI hiring lawsuit wave and what it means for your job search.

☑️ Key Takeaways

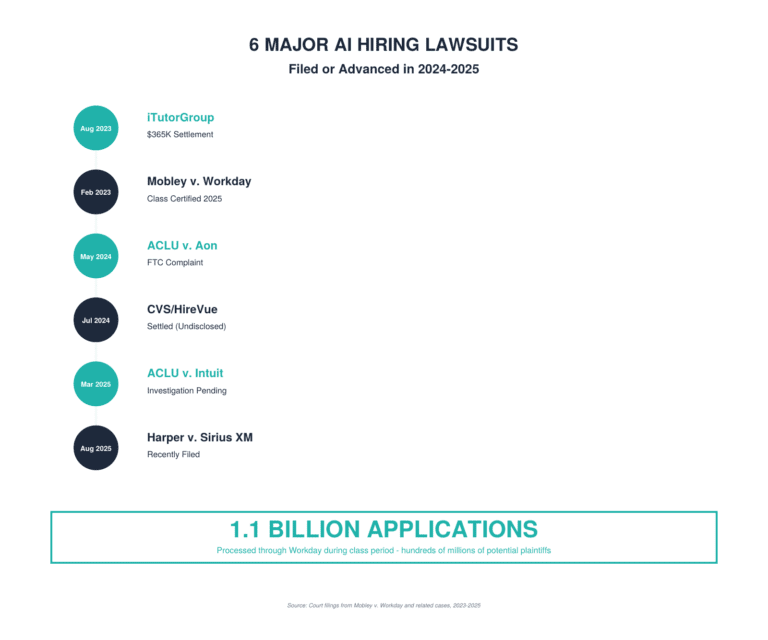

- AI hiring lawsuits are reshaping recruitment: At least six major discrimination cases were filed or progressed in 2024-2025, with the landmark Mobley v. Workday case potentially covering millions of job seekers over age 40.

- The bias is measurable and severe: Research shows AI resume screening tools prefer white-associated names in 85.1% of cases, while Black male candidates were disadvantaged in 100% of direct comparisons with white males.

- Both vendors and employers face liability: Courts established that AI tool providers can be sued directly as “agents” under employment discrimination laws, not just the companies using them.

- 99% of Fortune 500 companies use AI screening: If you’re applying to medium or large companies, you’re almost certainly encountering AI-powered evaluation at some point in the hiring process.

The Stat That Changed Everything: 85% Bias in AI Resume Screening

Before we map the lawsuits, you need to see the evidence that triggered this legal earthquake.

In 2024, researchers at the University of Washington conducted one of the most comprehensive audits of AI hiring bias to date. They tested three state-of-the-art AI resume screening models on 554 real resumes and 571 job descriptions across nine different occupations.

The methodology was simple but devastating. They took identical resumes with the same qualifications, experience, and education. The only thing they changed was the name at the top. They used 120 carefully selected names strongly associated with Black males, Black females, white males, and white females based on linguistic research.

Then they ran over three million comparisons to see which resumes the AI systems ranked higher.

The results shocked even the researchers:

White-associated names were preferred in 85.1% of tests. Black-associated names were preferred in only 8.6% of cases.

But the intersectional analysis revealed something even more disturbing. When researchers looked specifically at Black male candidates versus white male candidates in direct comparisons, the AI systems never preferred the Black male applicant. Not once. In 100% of head-to-head matchups, the white male name won.

“We found this really unique harm against Black men that wasn’t necessarily visible from just looking at race or gender in isolation,” explained lead author Kyra Wilson of the University of Washington.

The study also discovered that bias increased when resumes were shorter. When the AI had less information to work with, demographic signals like names carried even more weight in the algorithm’s decision-making process.
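The name-swap comparison described above can be sketched in a few lines of Python. This is not the researchers’ code: `tally_preferences`, `toy_score`, and the placeholder names are hypothetical, and the scorer is deliberately rigged so the effect is visible. The study’s own procedure, built on real embedding models, is more involved than a raw win count.

```python
from collections import Counter
from typing import Callable, Dict, Sequence


def tally_preferences(
    resume_body: str,
    job_description: str,
    names_a: Sequence[str],
    names_b: Sequence[str],
    score: Callable[[str, str], float],
) -> Dict[str, float]:
    """Share of head-to-head comparisons won by each name group.

    The resume body is identical in every comparison; only the name
    on the first line changes.
    """
    wins = Counter()
    for name_a in names_a:
        for name_b in names_b:
            score_a = score(f"{name_a}\n{resume_body}", job_description)
            score_b = score(f"{name_b}\n{resume_body}", job_description)
            if score_a > score_b:
                wins["a"] += 1
            elif score_b > score_a:
                wins["b"] += 1
            else:
                wins["tie"] += 1
    total = sum(wins.values())
    if total == 0:
        raise ValueError("need at least one name in each group")
    return {key: wins[key] / total for key in ("a", "b", "tie")}


def toy_score(resume: str, job_description: str) -> float:
    """Hypothetical scorer: keyword overlap plus a rigged name bonus."""
    resume_words = set(resume.lower().split())
    job_words = set(job_description.lower().split())
    # Assumption for illustration only: the scorer leaks a preference for
    # one placeholder name, standing in for a learned demographic signal.
    bonus = 0.5 if "name_a1" in resume_words else 0.0
    return len(resume_words & job_words) + bonus


result = tally_preferences(
    resume_body="five years managing marketing campaigns",
    job_description="marketing manager with campaigns experience",
    names_a=["Name_A1"],
    names_b=["Name_B1", "Name_B2"],
    score=toy_score,
)
print(result)
```

Swapping `toy_score` for a scorer that ignores the name line should drive every comparison to a tie, which is the baseline an unbiased system would be expected to approach.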

This wasn’t some obscure academic study using outdated models. The three systems tested were among the highest-performing AI tools available at the time: E5-mistral-7b-instruct, GritLM-7B, and SFR-Embedding-Mistral. Models of this kind are the same class of technology that commercial tools use to screen millions of applications.

Within months of this research going public in late 2024, the lawsuits started accelerating. Plaintiffs’ attorneys suddenly had irrefutable proof that AI discrimination wasn’t theoretical. It was measurable, systematic, and affecting millions of job seekers.

The Perfect Storm: Why 2025 Became The Tipping Point for AI Discrimination Lawsuits

That 85% bias rate didn’t exist in a vacuum. Three converging forces turned 2025 into the year AI hiring discrimination lawsuits exploded.

First, AI adoption hit critical mass at exactly the wrong time. By early 2025, 48% of hiring managers were using AI to screen resumes, up from just 12% in 2020. That number is expected to hit 83% by the end of 2025. An estimated 99% of Fortune 500 companies now use some form of AI-powered applicant tracking system or screening tool.

When a technology touches hundreds of millions of job applications annually, even small biases create massive discriminatory impact. Systematic exclusion that would take human recruiters years to carry out, AI can accomplish in months.

Second, researchers proved the bias at the exact moment courts were ready to listen. The University of Washington study we just examined wasn’t published in some obscure journal. It was presented at the prestigious AAAI/ACM Conference on Artificial Intelligence, Ethics, and Society in October 2024.

Major media outlets picked up the story. The 85.1% statistic became impossible to ignore. Suddenly, plaintiffs’ attorneys had peer-reviewed, published research they could cite in court filings. Judges couldn’t dismiss AI bias as speculation anymore. The evidence was quantified, replicated, and devastating.

Third, courts started allowing these cases to proceed at a critical moment in 2024-2025. In July 2024, a federal judge ruled that AI vendors themselves could be held liable for discrimination, not just the employers using their tools. Then in May 2025, the landmark Mobley v. Workday case was conditionally certified as a nationwide collective action, potentially covering millions of applicants.

These weren’t isolated rulings. They represented a fundamental shift in how courts view algorithmic decision-making. Judges were rejecting the argument that AI tools are neutral intermediaries. Instead, courts increasingly recognized that when an algorithm makes decisions about who gets hired, the company behind that algorithm can be sued for discrimination.

The legal precedent is now set. The evidence is overwhelming. And by mid-2025, frustrated job seekers were finding attorneys willing to take these cases.

The Complete Database: Every Major AI Hiring Discrimination Case from 2024-2025

Between early 2024 and late 2025, we tracked six major cases that define this legal wave. Some settled quickly. Others are still progressing through the courts with potential damages in the billions. Here’s what you need to know about each one.

1. Mobley v. Workday, Inc. (February 2023 – Present)

Status: Collective action conditionally certified May 2025, discovery ongoing

Court: U.S. District Court for the Northern District of California

Plaintiffs: Derek Mobley and four additional opt-in plaintiffs on behalf of millions of job applicants

Claims: Age discrimination (ADEA), race discrimination (Title VII), disability discrimination (ADA)

This is the big one. Derek Mobley, an African American man over 40 with a disability, applied to more than 100 jobs through companies using Workday’s AI screening platform. He was rejected every single time without an interview.

Workday’s AI system analyzes resumes and automatically recommends whether employers should accept or reject candidates. Mobley claimed the algorithms discriminated based on age, race, and disability by relying on biased training data and employer preferences that screen out protected classes.

The game-changing development: In July 2024, Judge Rita Lin denied Workday’s motion to dismiss, ruling that Workday could be held liable as an “agent” of employers even though Workday itself wasn’t hiring anyone. The court reasoned that because Workday’s AI participated in hiring decisions rather than simply implementing employer criteria, it could face direct liability.

Then in May 2025, the court certified the case as a nationwide collective action under the Age Discrimination in Employment Act. The potential class includes everyone age 40 or older who applied through Workday’s platform since September 2020 and was denied employment recommendations.

According to court filings, Workday represented that 1.1 billion applications were rejected through its system during the relevant period. The collective could potentially include hundreds of millions of applicants.

Why it matters for job seekers: If this case succeeds, it will establish that AI screening systems can be treated as a “unified policy” even when used by different companies for different positions. That means other AI tools could face similar class actions, and vendors will be forced to conduct serious bias audits or face catastrophic legal exposure.

2. EEOC v. iTutorGroup, Inc. (May 2022 – August 2023)

Status: Settled for $365,000

Court: U.S. District Court for the Eastern District of New York

Plaintiffs: Equal Employment Opportunity Commission on behalf of 200+ rejected applicants

Claims: Age discrimination (ADEA)

This was the EEOC’s first-ever AI discrimination lawsuit and settlement. iTutorGroup, which provides English tutoring services to students in China, programmed its hiring software to automatically reject female applicants age 55 or older and male applicants age 60 or older.

The discrimination came to light when one applicant submitted two identical applications with different birth dates. Only the application with the younger birth date received an interview invitation.
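The age cutoffs and the two-application test described above can be sketched as follows. This is a reconstruction from the description in this section, not iTutorGroup’s actual software: the function names, the `gender` strings, and the sample dates are all hypothetical.

```python
from datetime import date
from typing import Callable


def age_on(birth_date: date, as_of: date) -> int:
    """Age in whole years on a given day."""
    had_birthday = (as_of.month, as_of.day) >= (birth_date.month, birth_date.day)
    return as_of.year - birth_date.year - (0 if had_birthday else 1)


def age_cutoff_screen(gender: str, birth_date: date, as_of: date) -> bool:
    """Return True if the application passes the screen.

    Mirrors the rule as described above: reject women aged 55 or older
    and men aged 60 or older, regardless of qualifications.
    """
    age = age_on(birth_date, as_of)
    if gender == "female" and age >= 55:
        return False
    if gender == "male" and age >= 60:
        return False
    return True


def paired_birthdate_test(
    screen: Callable[[str, date, date], bool],
    gender: str,
    older_birth: date,
    younger_birth: date,
    as_of: date,
) -> bool:
    """True when only the younger-dated copy of an identical application passes."""
    return screen(gender, younger_birth, as_of) and not screen(gender, older_birth, as_of)


# Hypothetical dates chosen only to land on either side of the cutoff.
evidence_of_age_screen = paired_birthdate_test(
    age_cutoff_screen,
    gender="female",
    older_birth=date(1962, 1, 1),
    younger_birth=date(1980, 1, 1),
    as_of=date(2020, 6, 1),
)
print(evidence_of_age_screen)
```

Because the two applications differ only in birth date, a split outcome isolates age as the deciding factor, which is what made the applicant’s experiment persuasive.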

Under the settlement terms, iTutorGroup paid $365,000 to affected applicants, implemented anti-discrimination policies, conducted mandatory training, and invited all previously rejected applicants to reapply.

Why it matters for job seekers: This case proved that even relatively simple algorithmic screening (not sophisticated AI) violates federal law when it automatically excludes protected classes. The EEOC sent a clear message: “Even when technology automates the discrimination, the employer is still responsible.”

3. ACLU Complaint Against Intuit and HireVue (March 2025)

Status: Investigation pending with EEOC and Colorado Civil Rights Division

Court: Administrative complaint (not yet federal lawsuit)

Plaintiff: D.K., a deaf Indigenous woman

Claims: Disability discrimination (ADA), race discrimination (Title VII), Colorado Anti-Discrimination Act violations

D.K. worked for Intuit in seasonal roles since 2019, receiving positive feedback and bonuses. When she applied for a promotion to Seasonal Manager in 2024, Intuit required her to complete an AI video interview through HireVue’s platform.

She requested human-generated captioning as a reasonable accommodation. Intuit denied the request, stating HireVue’s automated subtitles would suffice. In practice, portions of the interview lacked subtitles entirely, forcing her to rely on error-prone browser auto-captioning.

The AI system gave her low scores and recommended she “practice active listening.” She didn’t get the promotion.

The complaint alleges HireVue’s automated speech recognition technology performs worse for deaf applicants and for speakers of non-white English dialects, including Native American English. Research shows these systems often have difficulty accurately recognizing and analyzing speech patterns that differ from standard American English.

Why it matters for job seekers: This case raises a critical question courts haven’t fully answered: do accessibility and accommodation requirements apply to AI tools the same way they apply to human interviewers? If companies must provide accommodations for AI-driven interviews, it could force major changes in how these tools are deployed.

4. ACLU Complaints Against Aon Consulting (May 2024)

Status: FTC complaint and EEOC charges pending

Agencies: Federal Trade Commission and Equal Employment Opportunity Commission

Plaintiff: Biracial autistic job applicant and similarly situated applicants

Claims: Deceptive marketing (FTC Act), disability discrimination, race discrimination

This case targets three widely-used Aon hiring tools: ADEPT-15 (personality assessment), vidAssessAI (automated video interviewing), and gridChallenge (cognitive ability test).

The ACLU alleges these tools are deceptively marketed as “bias-free” and able to “improve diversity” when research shows they likely discriminate against people with autism, mental health disabilities, and racial minorities.

The personality test problem: ADEPT-15 measures traits like “reading people’s emotions” and “emotional awareness.” The test asks questions nearly identical to those used by healthcare professionals to diagnose autism. People who score lower on these measures are more likely to be screened out, creating systematic discrimination against autistic applicants.

The video interview problem: vidAssessAI uses AI to analyze applicants’ speech patterns, facial expressions, and personality traits. Research shows automated speech recognition systems perform worse for non-white speakers and people with disabilities affecting speech.

The cognitive ability problem: Aon’s own data shows that Black, Hispanic, Latino, and Asian test-takers all scored lower on average than white test-takers on gridChallenge, with the largest gap for Black applicants.

Why it matters for job seekers: The FTC angle is new and powerful. If vendors can be held liable for false advertising when they claim their AI tools eliminate bias, it creates a strong incentive for companies to actually test their products for discrimination rather than just making marketing claims.

5. CVS/HireVue Settlement (July 2024)

Status: Privately settled

Court: Massachusetts state court

Plaintiff: Job applicant

Claims: Violation of Massachusetts law prohibiting lie detector tests in employment

A CVS job applicant claimed the company violated Massachusetts law by requiring prospective employees to take HireVue video interviews that used Affectiva’s AI technology to track facial expressions and assign an “employability score.”

The lawsuit alleged the system measured facial movements like smiles and smirks to assess traits including “conscientiousness and responsibility” and “innate sense of integrity and honor.” Under Massachusetts law, this type of analysis could qualify as an illegal lie detector test.

CVS settled the case privately, and terms were not disclosed.

Why it matters for job seekers: This case shows that AI hiring tools face legal challenges beyond federal discrimination law. State-level consumer protection, privacy, and employment laws create additional liability for companies using invasive AI screening methods.

6. Harper v. Sirius XM Radio, LLC (August 2025)

Status: Recently filed, early stages

Court: U.S. District Court for the Eastern District of Michigan

Plaintiff: Unsuccessful Black job applicant

Claims: Race discrimination under federal anti-discrimination statutes

The most recent case in our database involves a Black applicant who claims Sirius XM’s AI hiring tool discriminated against him based on race. The lawsuit alleges the company’s AI system relied on historical hiring data that perpetuated past biases, resulting in his application being downgraded despite his qualifications.

This case is still in its earliest stages, but it represents the continuing wave of litigation against employers using AI screening.

Why it matters for job seekers: Even in late 2025, new AI discrimination cases continue to be filed. This isn’t a problem that’s been solved or will go away quietly.

Beyond the 85%: Additional Research Proving AI Bias Is Systematic

The University of Washington study we examined earlier isn’t an outlier. Multiple academic institutions have documented systematic bias in AI hiring tools throughout 2024 and 2025. The evidence is converging from every direction.

The University of Washington Deep Dive: Why 85.1% Matters

Let’s dig deeper into what that 85.1% bias rate actually means in practice.

Researchers Kyra Wilson and Aylin Caliskan didn’t just document that bias exists. They revealed how it operates at different levels of the hiring funnel. The AI systems they tested convert resumes into numerical representations, then measure how closely candidates match job descriptions using “cosine similarity” scoring.

This is the same technology companies use to screen millions of real applications. When the algorithm gives your resume a lower similarity score because of your name, you never make it past the initial screening. No human ever sees your application.
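For readers who want to see what “cosine similarity” scoring means, here is a minimal sketch. The three-number vectors are made up for illustration; real embedding models produce vectors with thousands of dimensions, and the function and variable names are our own rather than anything from the study.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def rank_resumes(
    job_vector: Sequence[float],
    resume_vectors: Dict[str, Sequence[float]],
) -> List[str]:
    """Resume IDs ordered from most to least similar to the job description."""
    return sorted(
        resume_vectors,
        key=lambda rid: cosine_similarity(job_vector, resume_vectors[rid]),
        reverse=True,
    )


# Invented vectors standing in for model embeddings.
job = [0.9, 0.1, 0.4]
resumes = {
    "resume_1": [0.8, 0.2, 0.5],
    "resume_2": [0.1, 0.9, 0.2],
}
ranking = rank_resumes(job, resumes)
print(ranking)
```

The point to notice is that the whole resume, name included, is folded into one vector. Anything that nudges that vector, even slightly, can change where a candidate lands in the ranking.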

The occupational analysis revealed bias everywhere: The discrimination wasn’t limited to certain job types. From marketing managers to engineers to teachers, AI systems consistently preferred white-associated names across all nine occupations tested.

University of Hong Kong Study: Consistent Patterns Across Multiple AI Models

A May 2025 study from researchers at the University of Hong Kong and the Chinese Academy of Sciences tested five leading large language models on resume evaluation. The findings were consistent: most models awarded lower scores to Black male candidates compared to white male candidates with identical qualifications.

The researchers noted that anti-Black male biases were “deeply embedded in how current AI systems evaluate candidates,” suggesting these patterns exist across different AI architectures and training approaches.

The Mechanisms of AI Bias

How does AI learn to discriminate? Two primary mechanisms drive bias in hiring algorithms:

- Biased training data: AI systems learn patterns from historical data. If past hiring decisions reflected human biases (which research consistently shows they did), the AI will replicate and amplify those biases. When a company trains AI on resumes of previously successful employees, the system learns that candidates who look like past employees are “better,” perpetuating homogeneity.

- Proxy discrimination: Even when AI doesn’t directly use protected characteristics like race or age, it can use proxy variables that correlate with protected classes. School names, zip codes, gaps in employment, and even word choices in cover letters can serve as proxies for race, socioeconomic status, or disability.

For example, attending a historically Black college might correlate with being Black, so an AI trained on data where few HBCU graduates were hired might learn to downgrade those applicants. The system never explicitly considers race, but it achieves the same discriminatory outcome.
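A toy example makes the proxy effect concrete. Everything here is invented for illustration: the records, the group labels, and the deliberately naive “model” that simply memorizes the historical hire rate per school type. The group label is stored only so we can measure the outcome afterward; the scoring step never reads it.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Invented toy records: (school_type, group, hired). Not real data.
Record = Tuple[str, str, int]
HISTORY: List[Record] = (
    [("hbcu", "black", 0)] * 3
    + [("hbcu", "black", 1)] * 1
    + [("other", "white", 1)] * 3
    + [("other", "white", 0)] * 1
    + [("other", "black", 1)] * 1
    + [("other", "black", 0)] * 1
)


def learn_school_scores(records: List[Record]) -> Dict[str, float]:
    """'Train' by memorizing the past hire rate for each school type.

    The group field is ignored entirely, so race is never an input.
    """
    totals: Dict[str, int] = defaultdict(int)
    hires: Dict[str, int] = defaultdict(int)
    for school, _group, hired in records:
        totals[school] += 1
        hires[school] += hired
    return {school: hires[school] / totals[school] for school in totals}


def mean_score_by_group(
    records: List[Record], school_scores: Dict[str, float]
) -> Dict[str, float]:
    """Average score each group would receive from the school-only model."""
    sums: Dict[str, float] = defaultdict(float)
    counts: Dict[str, int] = defaultdict(int)
    for school, group, _hired in records:
        sums[group] += school_scores[school]
        counts[group] += 1
    return {group: sums[group] / counts[group] for group in sums}


school_scores = learn_school_scores(HISTORY)
group_scores = mean_score_by_group(HISTORY, school_scores)
print(school_scores)
print(group_scores)
```

Even though the model only ever sees school type, the average score differs by group because school type and group are correlated in the historical data.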

Who Gets Sued: Vendors vs. Employers

One of the most significant developments in 2024-2025 was courts establishing that AI vendors themselves can face direct liability for discrimination.

Previously, the assumption was that only employers could be sued under federal anti-discrimination laws. After all, the employer makes the final hiring decision, right?

Not anymore.

In the Mobley v. Workday case, Judge Rita Lin rejected that reasoning. She wrote: “Workday’s role in the hiring process is no less significant because it allegedly happens through artificial intelligence rather than a live human being who is sitting in an office going through resumes manually to decide which to reject.”

The court continued: “Drawing an artificial distinction between software decisionmakers and human decisionmakers would potentially gut anti-discrimination laws in the modern era.”

This changes everything. Now both AI vendors and the employers using their tools face legal exposure:

AI vendors can be sued as “agents” of employers under Title VII, the ADEA, and the ADA. If your AI tool systematically screens out protected classes, you’re liable even if you don’t employ those candidates yourself.

Employers remain liable for their AI tools under the “employer” provisions of anti-discrimination laws. You can’t outsource your legal obligations by hiring a third party to screen candidates.

Both can be named in the same lawsuit. Plaintiffs’ attorneys are increasingly naming both the vendor and the employer as co-defendants, maximizing pressure for settlement.

The EEOC has been explicit about this dual liability framework. If a vendor’s tool screens out protected classes at disproportionate rates, both the vendor and the employer using it can face enforcement actions.

The Demographics of Discrimination: Who’s Being Harmed

The lawsuits reveal clear patterns in who faces discrimination from AI hiring tools.

Age Discrimination (40+)

Age discrimination represents the largest class of potential plaintiffs. The Mobley v. Workday collective potentially includes millions of applicants over 40.

AI systems often discriminate against older workers through several mechanisms:

- Recency bias: Algorithms prioritize recent education, recent job changes, and current technical skills, all of which tend to disadvantage older workers with longer, more stable career histories.

- Cultural fit proxies: Systems trained on data from companies with young workforces learn to prefer candidates who match that demographic.

- Resume screening patterns: Longer resumes with more experience can be scored lower than shorter resumes because the AI interprets length as confusion or lack of focus.

One applicant in the Mobley case reported being rejected at 1:50 a.m., less than one hour after submitting his application. The speed of rejection suggests pure algorithmic screening with no human review.

If you’re over 40 and experiencing immediate rejections from large companies using applicant tracking systems, you’re not alone. And increasingly, you have legal recourse.

Race Discrimination

Multiple studies confirm that AI systems discriminate against Black applicants, particularly Black men.

The discrimination operates through several channels:

- Name-based screening: Resumes with Black-associated names like Jamal, Lakisha, DeShawn, or Ebony receive lower scores than identical resumes with white-associated names like Brad, Emily, Greg, or Anne.

- School-based screening: AI trained on companies that historically didn’t recruit from HBCUs may learn to downgrade applicants from those institutions.

- Zip code proxies: If certain zip codes correlate with race and the training data shows few successful candidates from those areas, the AI learns to deprioritize those addresses.

- Speech pattern analysis: Video interview AI that analyzes speech patterns, accents, and word choices can discriminate against speakers of African American Vernacular English or other non-standard dialects.

The research is unambiguous: current AI hiring systems create significant barriers for Black job seekers at scale.

Disability Discrimination

The cases against Aon and Intuit/HireVue highlight how AI tools discriminate against people with disabilities.

- Autism and mental health disabilities: Personality tests that measure social skills, emotional reading, and communication style systematically screen out autistic individuals and people with social anxiety, depression, or other mental health conditions.

- Deaf and hard of hearing: Video interview platforms that rely on speech recognition without proper captioning create insurmountable barriers for deaf applicants.

- Cognitive disabilities: Gamified cognitive tests and timed assessments discriminate against people with ADHD, dyslexia, and processing disorders.

The legal issue becomes particularly sharp when companies refuse to provide accommodations. If a deaf applicant requests human-generated captioning for an AI video interview and the company denies that request, the company faces clear exposure under the ADA.

Intersectional Discrimination

Perhaps the most disturbing finding from recent research is how discrimination compounds at intersections of identity.

Black women face unique barriers different from those faced by either Black men or white women. The AI doesn’t just add race bias plus gender bias. It creates new patterns of discrimination specific to Black women’s resumes.

Similarly, older Black women with disabilities face triple discrimination that’s greater than the sum of its parts.

This intersectional discrimination is harder to detect and measure, but it’s showing up in both research studies and legal complaints.

Settlement Amounts and the True Cost of AI Discrimination

What’s the financial exposure for companies using biased AI tools?

Based on the cases we mapped, here’s the cost breakdown:

- Direct settlements: iTutorGroup paid $365,000 for discriminating against 200+ applicants. That works out to roughly $1,800 per affected applicant. CVS settled for undisclosed terms.

- Potential collective action damages: If Workday ultimately settles or loses, the damages could be astronomical. With a potential collective in the hundreds of millions and per-applicant damages in the thousands, even a small fraction of successful claims could push the total past $1 billion.

- Bias audit costs: Companies responding to this litigation are conducting bias audits of their AI systems. Professional audits cost $50,000 to $200,000 per AI system.

- Remediation costs: Fixing a biased algorithm requires retraining on better data, implementing new safeguards, and conducting ongoing testing. Estimate $100,000+ for significant remediation.

- Reputation damage: When news breaks that your company’s AI discriminates against Black applicants or older workers, the brand damage can be incalculable. Top diverse candidates won’t want to apply, and your employee base questions whether their own hiring was fair.

- Regulatory scrutiny: After an AI discrimination lawsuit, expect heightened attention from the EEOC, FTC, state attorneys general, and other regulators looking for similar problems in your other systems.

The total cost can easily reach millions of dollars even for companies that settle early. For those that fight and lose class certification, the exposure becomes existential.
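The arithmetic behind these figures is easy to reproduce. The sketch below uses only the numbers quoted in the list above; the function name and the example of three AI systems with one needing remediation are hypothetical inputs, not data from any case.

```python
from typing import Tuple

# Figures quoted in the cost breakdown above.
ITUTORGROUP_SETTLEMENT = 365_000
ITUTORGROUP_APPLICANTS_FLOOR = 200  # reported as "200+", so this is a minimum
AUDIT_COST_LOW, AUDIT_COST_HIGH = 50_000, 200_000  # per AI system
REMEDIATION_FLOOR = 100_000  # reported as "$100,000+"

# Dividing by the minimum head count gives an upper bound per applicant.
per_applicant_ceiling = ITUTORGROUP_SETTLEMENT / ITUTORGROUP_APPLICANTS_FLOOR


def audit_and_remediation_range(
    num_systems: int, systems_needing_fixes: int
) -> Tuple[int, int]:
    """Rough (low, high) spend on audits plus remediation.

    The high figure is not a true ceiling: remediation is quoted only as
    a floor, so it is counted at that floor in both bounds.
    """
    if systems_needing_fixes > num_systems:
        raise ValueError("cannot remediate more systems than were audited")
    remediation = systems_needing_fixes * REMEDIATION_FLOOR
    low = num_systems * AUDIT_COST_LOW + remediation
    high = num_systems * AUDIT_COST_HIGH + remediation
    return low, high


print(per_applicant_ceiling)  # 1825.0
print(audit_and_remediation_range(num_systems=3, systems_needing_fixes=1))
```

Note that this covers only the audit and remediation lines. Settlements, legal fees, and reputational harm sit on top of it and are far harder to estimate.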

The State-by-State Regulatory Patchwork

While federal anti-discrimination laws apply everywhere, states are adding their own AI-specific requirements that create additional compliance obligations and legal risk.

Colorado: First State AI Anti-Discrimination Law (May 2024)

Colorado became the first state to enact comprehensive AI anti-discrimination legislation. The law prohibits employers from using AI to discriminate against workers and requires companies to take extensive measures to avoid algorithmic discrimination.

Key provisions:

- Companies deploying high-risk AI in hiring must complete impact assessments

- Job postings must disclose when AI is used in the hiring process

- Applicants have the right to request an alternative selection process

- Enforcement by the Colorado Attorney General, with no private right of action

The ACLU complaint against Intuit/HireVue invokes a separate state statute, the Colorado Anti-Discrimination Act, showing how state law creates additional bases for litigation.

Illinois: Second State to Regulate AI in Hiring (August 2024)

Illinois passed legislation requiring employers to notify applicants and workers when AI is used for hiring, discipline, discharge, or other employment decisions. The law also prohibits using AI in ways that result in workplace discrimination.

While not as comprehensive as Colorado’s law, Illinois’s statute creates disclosure obligations and liability for companies that don’t comply.

New York City: Local Law 144 (July 2023)

New York City’s Local Law 144 requires employers using “automated employment decision tools” to:

- Conduct bias audits annually

- Publish audit results publicly

- Notify candidates that AI is being used

- Allow candidates to request alternative evaluation methods

However, the law has a significant loophole: it only applies when a tool is used to “substantially assist or replace” discretionary decision-making. Employers can try to avoid coverage by keeping human managers nominally in charge of the final decision.
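At the core of a bias audit like the one Local Law 144 requires is a simple comparison of selection rates. New York City’s audit rules define an “impact ratio” as a group’s selection rate divided by the selection rate of the most-selected group. The sketch below computes that ratio; the group labels and counts are invented, and the 0.8 cutoff is borrowed from the federal four-fifths rule of thumb as an assumption for this example, not a threshold the city’s law sets.

```python
from typing import Dict, List

FOUR_FIFTHS = 0.8  # assumed flagging threshold, see lead-in


def selection_rates(
    applicants: Dict[str, int], selected: Dict[str, int]
) -> Dict[str, float]:
    """Fraction of each group's applicants that the tool advanced."""
    rates = {}
    for group, count in applicants.items():
        if count <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = selected.get(group, 0) / count
    return rates


def impact_ratios(
    applicants: Dict[str, int], selected: Dict[str, int]
) -> Dict[str, float]:
    """Each group's selection rate relative to the most-selected group."""
    rates = selection_rates(applicants, selected)
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group had any selections")
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(
    ratios: Dict[str, float], threshold: float = FOUR_FIFTHS
) -> List[str]:
    """Groups whose impact ratio falls below the chosen threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Invented counts for two placeholder groups.
applicants = {"group_a": 200, "group_b": 150}
selected = {"group_a": 60, "group_b": 27}
ratios = impact_ratios(applicants, selected)
print(ratios)
print(flagged_groups(ratios))
```

A published audit reports ratios like these for each demographic category, which gives candidates and regulators a concrete number to question.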

California, Washington, and Others

Over 30 states have formed AI task forces or committees studying potential regulation. California and Washington are expected to pass comprehensive AI employment laws in the near future.

The patchwork of state laws creates significant compliance challenges for employers recruiting nationally. You need to understand obligations in every state where you hire, and violations can trigger both regulatory enforcement and private lawsuits.

What This Means for Job Seekers: 7 Critical Insights

If you’re looking for a job in 2025, here’s what you need to know about AI discrimination and the lawsuits reshaping hiring.

1. Assume AI Will Screen You First

If you’re applying to Fortune 500 companies or any company with more than 500 employees, your resume will almost certainly encounter AI screening before reaching human eyes.

The systems vary widely. Some just parse your resume for keywords. Others use sophisticated language models to evaluate your entire career narrative against job requirements. Some incorporate video interview analysis, personality testing, and cognitive assessments.

What you should do: Optimize your resume for both AI and humans. That means using clear job titles, incorporating relevant keywords from the job description, and formatting your resume simply so parsing software doesn’t miss key information.

For detailed guidance, check out our complete guide to ATS resume optimization.

2. Name-Based Discrimination Is Real

The 85% bias rate we covered earlier isn’t an anomaly. It’s the reality of how modern AI hiring systems operate.

Resumes with Black-associated names like Jamal, Lakisha, DeShawn, or Ebony receive systematically lower scores than identical resumes with white-associated names like Brad, Emily, Greg, or Anne. The University of Washington study proved this across multiple AI models and nine different occupations.

What you should do: You shouldn’t have to change your name to get a fair shot at a job. That said, some job seekers are using initials instead of first names, or using nicknames that don’t signal race. Others are applying with full names and documenting rejections for potential legal action.

If you believe you’re experiencing name-based discrimination, document everything. Save rejection emails, note application dates and companies, and consider consulting an employment attorney.

3. Age Discrimination Is Widespread and Largely Invisible

If you’re over 40 and getting immediately rejected from jobs you’re qualified for, AI age discrimination is a likely culprit.

The speed of rejection is often the tell. If you get a rejection within hours or minutes of applying, that’s pure algorithmic screening. No human reviewed your application.

What you should do: Consider these strategies to minimize age signals on your resume:

- Remove graduation dates from older degrees

- Focus your work history on the last 10-15 years

- Update your skills section with current technologies and methodologies

- Remove outdated software and systems from your skill list

For more guidance on handling age discrimination in your job search, read our article on career gaps and age proofing your resume.

4. Video Interview AI Is Particularly Problematic

AI that analyzes your facial expressions, tone of voice, and word choices during video interviews is scientifically questionable and legally risky for employers.

These systems claim to measure personality, honesty, and job fit based on micro-expressions and speech patterns. Research consistently shows they perform worse for non-white applicants, people with disabilities, and anyone who doesn’t conform to narrow communication norms.

What you should do: If a company requires an AI video interview:

- Research the specific platform being used (HireVue is the most common)

- Practice extensively so your delivery is smooth and confident

- Speak clearly and make direct eye contact with the camera

- Consider whether you’re comfortable working for a company that uses such invasive screening

For help preparing for AI-powered interviews, check out our video interview optimization guide.

5. You May Have Legal Recourse

If you believe you’ve been discriminated against by AI hiring tools, you have options.

Document everything:

- Save all application confirmations and rejection emails

- Note dates, companies, and positions applied for

- Screenshot job postings before they’re taken down

- Keep copies of the resume and cover letter you submitted

Consider filing a complaint:

- EEOC charges can be filed online at eeoc.gov

- State civil rights agencies also investigate discrimination claims

- The process is free and can lead to investigation and settlement

Consult an attorney:

- Employment discrimination attorneys often work on contingency (you pay nothing unless you win)

- Class action law firms are actively seeking AI discrimination cases

- An attorney can evaluate whether you have a viable claim

6. Some Industries and Roles Face Worse Discrimination

AI bias isn’t distributed evenly across all jobs. Research suggests:

Higher discrimination risk:

- Tech companies (ironic, given they build these systems)

- Finance and consulting

- Roles requiring “cultural fit” or soft skills

- Jobs with subjective evaluation criteria

Lower discrimination risk:

- Highly technical roles with objective skill requirements

- Trades and skilled labor (less likely to use sophisticated AI screening)

- Small companies (less likely to use AI tools at all)

- Government jobs (subject to stricter anti-discrimination oversight)

What you should do: If you’re in a high-risk category, be especially diligent about tailoring your resume to job requirements and following up directly with recruiters when possible.

7. Direct Outreach Still Works

While AI dominates the front door of large companies, human connections still open side doors.

Strategies that bypass AI screening:

- LinkedIn networking with hiring managers and recruiters

- Employee referrals (these usually get prioritized or skip initial AI screening)

- Direct email to department heads

- Industry conferences and networking events

- Informational interviews that build relationships

For comprehensive networking strategies that work in 2025, read our guide on unconventional networking tactics and how to turn cold connections into job referrals.

The Interview Guys Take: Why The Momentum Won’t Stop

Based on our research mapping these lawsuits throughout 2024 and 2025, here’s what we predict for the next 12-24 months.

2025 wasn’t just another year of incremental change. It was the inflection point where everything accelerated. And that acceleration isn’t slowing down.

- More lawsuits, far larger settlements: The conditional certification of Mobley v. Workday as a collective action in May 2025 opened the floodgates. We’re already seeing new filings in the second half of 2025, and we expect dozens more in 2026. Settlements that were measured in hundreds of thousands of dollars in 2023 will grow to millions or even tens of millions as class sizes expand.

- Vendor liability becomes the norm: Courts have begun allowing discrimination claims against AI vendors directly, as in Mobley v. Workday. This will force tool providers to conduct serious bias audits or face catastrophic exposure. Some vendors may exit the market entirely.

- State regulations proliferate: California, Washington, New York, and Massachusetts will likely pass comprehensive AI employment laws in 2025-2026. The patchwork of state requirements will create compliance nightmares for national employers.

- The return of human screening (sort of): Some companies will respond by adding human review to AI recommendations. Others will scale back AI usage to avoid liability. But humans won’t fully replace AI; the volume of applications makes that impossible.

- Bias auditing becomes an industry: Expect rapid growth in companies offering AI bias audits, algorithmic fairness testing, and compliance monitoring. This will become a required service like financial audits.

- Resume screening AI faces scrutiny, other AI gets a pass (for now): The lawsuits focus heavily on resume and application screening because that’s where the bias is most measurable. But AI is being deployed throughout hiring: sourcing, interview scheduling, reference checking, and even negotiating offers. Those uses will eventually face similar scrutiny.

- Job seekers gain leverage: As awareness grows, talented candidates will start asking employers about their AI screening practices. Companies with problematic tools will struggle to attract diverse talent.

- The technology improves (slowly): Facing legal pressure and bad publicity, AI vendors will invest more in debiasing techniques. But the research suggests bias is deeply embedded in language models trained on internet data, making it extremely difficult to fully eliminate.

Interview Guys Tip

Here’s something most job seekers don’t realize: You can request information about how AI is being used to evaluate you. While companies aren’t required to provide full algorithmic details, some states mandate disclosure when AI is used in hiring decisions. If you’re rejected and suspect AI discrimination, send a formal request asking whether AI was used to evaluate you and requesting any information the company can provide about that process. Document their response. This creates a record that can be valuable if you later decide to file a complaint or join a class action lawsuit.

How to Protect Yourself: The Complete Action Plan

Based on everything we’ve learned from mapping these lawsuits, here’s your step-by-step action plan for navigating AI-screened job applications:

Before You Apply:

- Research the company’s hiring process: Look for mentions of specific AI tools in job postings, Glassdoor reviews, or news articles. Some companies openly disclose their use of HireVue, Workday, or other platforms.

- Optimize your resume strategically: Review our guides on how AI analyzes your interview and the best ATS format for 2025 to give your resume the best chance of passing initial screening.

- Tailor every application: Generic resumes fail AI screening at much higher rates. Use keywords from the job description naturally throughout your resume. Our job description analyzer can help you identify the most important keywords.

During the Application Process:

- Document everything: Screenshot the job posting, save confirmation emails, and note the date and time of application. This creates evidence if you need to challenge discrimination later.

- Follow up strategically: Don’t just submit and forget. Send a follow-up email to the hiring manager or recruiter expressing continued interest. Human contact can sometimes pull your application out of the AI black hole.

- Seek employee referrals: Many companies prioritize or fast-track referred candidates, sometimes bypassing AI screening entirely. Check out our guide on building authentic relationships with industry leaders on LinkedIn.

If You Face AI Video Interviews:

- Know your rights: In Colorado, you can request an alternative evaluation process. In Illinois and New York City, companies must disclose AI usage. Research your state’s specific protections.

- Prepare thoroughly: Review our video interview tips and practice extensively. AI systems may penalize hesitation, unclear speech, and poor eye contact.

- Request accommodations if needed: If you have a disability that affects your performance in AI video interviews, request accommodations in writing. Companies that refuse may face serious ADA liability.

After Rejection:

- Analyze the pattern: If you’re getting immediate rejections from multiple companies, especially those known to use AI screening, you may be experiencing algorithmic discrimination.

- Consider legal options: Contact an employment discrimination attorney if you believe you’re being systematically discriminated against. Many work on contingency and are actively seeking AI bias cases.

- File EEOC complaints when warranted: You can file online at eeoc.gov. The process is free, and even if your individual claim doesn’t go anywhere, it contributes to pattern-of-discrimination evidence that helps class actions.
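If you keep your application records in a spreadsheet or a short script, spotting a pattern of fast rejections gets easier. The sketch below is one possible way to do it in Python, not a prescribed method: the `Application` record, the `fast_rejections` helper, the sample companies, and the 24-hour threshold are all hypothetical choices made for illustration. A fast rejection only hints at automated screening. It does not show discrimination by itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Application:
    """One job application, as you might record it in your own log (hypothetical layout)."""
    company: str
    position: str
    applied_at: datetime
    rejected_at: Optional[datetime] = None  # None while no rejection has arrived

    def time_to_rejection(self) -> Optional[timedelta]:
        """Elapsed time between applying and being rejected, if rejected."""
        if self.rejected_at is None:
            return None
        return self.rejected_at - self.applied_at


def fast_rejections(
    apps: List[Application],
    threshold: timedelta = timedelta(hours=24),  # assumed cutoff, adjust to taste
) -> List[Application]:
    """Return applications that were rejected within `threshold` of applying."""
    flagged = []
    for app in apps:
        elapsed = app.time_to_rejection()
        if elapsed is not None and elapsed <= threshold:
            flagged.append(app)
    return flagged


# Made-up sample entries, not real data.
applications = [
    Application("Example Corp", "Analyst",
                datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 9, 20)),
    Application("Sample LLC", "Analyst",
                datetime(2025, 3, 4, 14, 0), datetime(2025, 3, 18, 10, 0)),
    Application("Demo Inc", "Coordinator",
                datetime(2025, 3, 5, 8, 30)),  # still waiting on a response
]

flagged = fast_rejections(applications)
for app in flagged:
    print(f"{app.company} ({app.position}): rejected after {app.time_to_rejection()}")
```

Keeping the application and rejection timestamps next to your saved emails and screenshots gives an attorney or agency a clear timeline to work from.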

The Bottom Line

2025 will be remembered as the year AI hiring discrimination moved from a technical concern to a legal crisis.

The 85.1% bias rate isn’t theoretical. It’s measurable, replicable, and happening right now to millions of job seekers. Black applicants, older workers, and people with disabilities face systematic exclusion from opportunities before any human ever sees their resume.

The lawsuits we mapped throughout 2024 and 2025 reveal the beginning of a fundamental reckoning. Courts certified class actions. Vendors faced direct liability for the first time. States passed comprehensive regulations.

But here’s the good news: the legal system is catching up to the technology, and companies are starting to face real consequences for deploying biased AI tools.

For job seekers, awareness is your first defense. Know that AI is screening you. Understand how it works. Optimize your materials accordingly. Document everything. And if you experience discrimination, you have legal recourse.

The AI hiring lawsuit explosion of 2025 is just the beginning. These cases will reshape how companies recruit, how vendors build their tools, and how job seekers navigate the modern job market for years to come.

Stay informed, protect yourself, and remember: you’re not alone in facing these challenges.

BY THE INTERVIEW GUYS (JEFF GILLIS & MIKE SIMPSON)

Mike Simpson: The authoritative voice on job interviews and careers, providing practical advice to job seekers around the world for over 12 years.

Jeff Gillis: The technical expert behind The Interview Guys, developing innovative tools and conducting deep research on hiring trends and the job market as a whole.

Resources & References

This report draws on comprehensive research from authoritative sources, including industry surveys, labor market analyses, and salary databases current as of Q1-Q4 2025.

Additional Resources:

For more help with your job search and understanding how AI affects hiring, check out these resources:

How Many Companies Are Using AI to Review Resumes? [2025 Data & Statistics]

Mastering AI-Powered Job Interviews: The Complete Guide

The Skills-Based Hiring Playbook (And What It Means for Your Resume)

External Research and Legal Resources:

University of Washington Study: Gender, Race, and Intersectional Bias in Resume Screening

Quinn Emanuel: When Machines Discriminate – The Rise of AI Bias Lawsuits

EEOC Guidance on Use of Artificial Intelligence

ACLU Complaint Against HireVue and Intuit

Fisher Phillips: Comprehensive Review of AI Workplace Law and Litigation

Law and the Workplace: AI Bias Lawsuit Against Workday Reaches Next Stage

ACLU: Files FTC Complaint Against Major Hiring Technology Vendor

Sullivan & Cromwell: EEOC Settles First AI-Discrimination Lawsuit

Class Action Database: AI Job Screening, Interview & Hiring Lawsuits

Reworked: Why AI Hiring Discrimination Lawsuits Are About to Explode

American Bar Association: Navigating the AI Employment Bias Maze

HR Dive: AI hiring software was biased against deaf employees, ACLU alleges

University of Washington News: AI tools show biases in ranking job applicants’ names

NPR: Companies are turning to AI for hiring. That could lead to discrimination

Bloomberg Law: EEOC Settles First-of-Its-Kind AI Bias in Hiring Lawsuit